Convolutional Neural Networks in Search Engine Optimization

In this article, we will explore the basics of convolutional neural networks (CNNs), a popular type of neural network used for image recognition tasks. We will discuss how CNNs work and how they differ from traditional neural networks in terms of architecture and training.

We will also delve into some of the key considerations when designing and training a CNN, such as choosing the number of filters and kernel size, preventing overfitting, and handling imbalanced datasets.

Finally, we will touch upon the potential applications of CNNs in natural language processing and their potential impact on search engine optimization (SEO).

Convolutional neural networks (CNNs) have become a staple of machine learning thanks to their strong performance on a wide range of image recognition tasks. From classifying objects in photographs to detecting faces in videos, CNNs have proven their capabilities repeatedly.

What is a Convolutional Neural Network (CNN) and How Does it Work?

A convolutional neural network (CNN) is a type of artificial neural network specifically designed for processing data that has a grid-like topology, such as an image.

CNNs are inspired by the structure and function of the visual cortex, which is the part of the brain responsible for processing visual information.

CNNs are composed of several layers of interconnected neurons, which process and transform the input data through a series of mathematical operations. The layers of a CNN are typically organized into three types: convolutional layers, pooling layers, and fully connected layers.

The first layer of a CNN is the input layer, which takes in the raw input data such as an image. The input layer is followed by one or more convolutional layers, which perform a set of convolutional operations on the input data. These operations involve applying a set of learnable filters or kernels to the input data, which are designed to detect specific patterns or features in the data.

For example, a kernel that is designed to detect edges in an image would convolve over the input image by sliding the kernel over the image and computing the dot product between the entries of the kernel and the overlapping entries of the image. This results in a new feature map, which is a transformed version of the input image that highlights the locations of the detected edges.
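The sliding dot product described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: it assumes a single-channel image, no padding, and a stride of 1, and the kernel values are hand-picked for the example (in a trained CNN they would be learned). As in most deep learning libraries, the kernel is not flipped, so strictly speaking this is a cross-correlation.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and
    compute the dot product with the overlapping patch at each position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += kernel[di][dj] * image[i + di][j + dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 4x6 image: dark (0) on the left, bright (1) on the right.
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge_kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = conv2d(image, vertical_edge_kernel)
print(feature_map)  # [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The feature map is zero over the flat regions and large where the kernel straddles the boundary between dark and bright pixels, which is what "highlighting the locations of the detected edges" means in practice.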

Multiple convolutional layers can be stacked on top of each other, with each layer applying its own set of filters to the output of the previous layer and producing its own feature maps. Pooling layers, which are typically placed between convolutional layers, downsample these feature maps by taking the maximum or average value of a group of adjacent cells. Pooling reduces the size of the feature maps and, with it, the computational cost of the CNN.

After the final pooling layer, the feature maps are flattened into a vector and passed through one or more fully connected layers, which are similar to the fully connected layers in a traditional neural network. Each of these layers computes a linear combination of its input features and applies a nonlinear activation function; the last layer produces the final output of the CNN, such as a score for each class.

One of the key advantages of CNNs is their ability to learn hierarchical representations of the input data. By applying multiple convolutional and pooling layers, a CNN can learn to detect more complex patterns and features in the data at higher levels of abstraction. This allows CNNs to achieve state-of-the-art results on a wide range of tasks, including image classification, object detection, and segmentation.

Another advantage of CNNs is their tolerance to translation, which means that they can recognize patterns and features in the input data regardless of their position within the image. Because the same filter is applied at every position, a shifted pattern produces a correspondingly shifted response in the feature map (translation equivariance), and pooling then makes the output approximately insensitive to small shifts (translation invariance).
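A small one-dimensional sketch can make this position-independence concrete. It assumes a toy signal and a hand-picked filter (both hypothetical), and uses a global maximum as a stand-in for pooling. Shifting the pattern shifts the filter response by the same amount, while the pooled value stays the same.

```python
def correlate1d(signal, kernel):
    """Apply the same kernel at every position of a 1-D signal."""
    k = len(kernel)
    return [
        sum(kernel[d] * signal[i + d] for d in range(k))
        for i in range(len(signal) - k + 1)
    ]


kernel = [1, 2, 1]                    # hand-picked filter for illustration
signal = [0, 0, 5, 0, 0, 0, 0, 0]     # a "pattern" near the start
shifted = [0, 0, 0, 0, 0, 5, 0, 0]    # the same pattern, 3 steps to the right

response = correlate1d(signal, kernel)
response_shifted = correlate1d(shifted, kernel)

print(response)          # [5, 10, 5, 0, 0, 0]
print(response_shifted)  # [0, 0, 0, 5, 10, 5]

# Global max pooling gives the same value wherever the pattern appears.
print(max(response), max(response_shifted))  # 10 10
```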

In summary, a convolutional neural network is a type of artificial neural network that is specifically designed for processing data that has a grid-like topology, such as an image. CNNs consist of multiple layers of interconnected neurons that perform a series of convolutional, pooling, and fully connected operations on the input data to learn hierarchical representations and invariant features. These capabilities make CNNs a powerful tool for tasks such as image classification, object detection, and segmentation.

How Is a CNN Used in Image Recognition Tasks?

A CNN, or Convolutional Neural Network, is a type of artificial neural network specifically designed for image recognition tasks.

It is a machine learning algorithm that is inspired by the way the human brain processes visual information, and it is able to analyze and classify images with a high level of accuracy.

CNNs are composed of layers of interconnected artificial neurons that analyze and process data. The first layer of a CNN is the input layer, which is responsible for receiving the raw data in the form of an image. This image is then processed through a series of convolutional layers, which use filters to extract features from the image. These features could be edges, shapes, patterns, or any other visual element that can help the CNN identify the object in the image.

After the convolutional layers, the CNN has a series of fully connected layers, also known as the "dense" layers. These layers take the extracted features and use them to classify the image. The dense layers are able to analyze the extracted features in combination with each other, allowing the CNN to make a more informed and accurate classification.
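As a rough sketch of this classification step, the snippet below applies one dense layer to a short feature vector and converts the resulting scores into class probabilities with softmax. The feature values, weights, and biases are made up for illustration; in a real CNN the features come from the convolutional layers and the weights are learned.

```python
import math


def dense(features, weights, biases):
    """One fully connected layer: a weighted sum of all features per output."""
    return [
        sum(w * x for w, x in zip(row, features)) + b
        for row, b in zip(weights, biases)
    ]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


features = [1.0, 0.0, 2.0]      # hypothetical extracted features
weights = [
    [0.5, -1.0, 1.0],           # hypothetical weights for class 0
    [-0.5, 1.0, 0.0],           # hypothetical weights for class 1
]
biases = [0.0, 0.1]

scores = dense(features, weights, biases)
probs = softmax(scores)
predicted_class = probs.index(max(probs))
print(predicted_class)  # 0
```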

One of the key benefits of using a CNN for image recognition tasks is its ability to process large amounts of data quickly and accurately. CNNs are able to analyze images at a much faster rate than humans, and they are able to do so with a high degree of accuracy. This makes them particularly useful for tasks that involve analyzing large amounts of images, such as facial recognition or object detection.

Another benefit of CNNs is that they learn from data rather than relying on hand-written rules. During training, the network's accuracy generally improves as it is shown more labeled images, so a CNN trained on a larger and more varied dataset tends to be better at identifying objects and patterns in images. Note that a deployed CNN does not keep learning on its own; it improves only when it is retrained or fine-tuned on new data.

CNNs are a core tool in computer vision, the subfield of artificial intelligence concerned with enabling computers to interpret and understand visual data. In computer vision applications, CNNs are often used to analyze and classify images, for example by detecting objects in a scene or identifying patterns in an image.

One example of a computer vision application that uses CNNs is self-driving cars. These vehicles rely on a variety of sensors and cameras to gather visual data about their surroundings. The data is then processed by a CNN, which is able to classify objects in the scene and help the vehicle make informed decisions about its movements.

Another example of a CNN being used in image recognition tasks is in the field of medical imaging. In this case, CNNs are used to analyze medical images, such as X-rays or MRIs, to identify abnormalities or help diagnose diseases. This can be especially useful for flagging early-stage cancers or other subtle findings that are difficult for the human eye to spot.

In summary, a CNN is a powerful tool for image recognition tasks due to its ability to process large amounts of data quickly and accurately. Because its accuracy can be improved by training on more data, it is also well suited to tasks that require ongoing analysis of images. CNNs are commonly used in a variety of applications, including computer vision and medical imaging, to classify and identify objects in images.

How Does the Architecture of a CNN Differ From That of a Traditional Neural Network?

Convolutional neural networks (CNNs) are a type of neural network specifically designed to process and analyze data with a grid-like structure, such as images.

Traditional neural networks, on the other hand, are designed to process and analyze data in a more general sense, without a particular focus on data with a specific structure. As a result, the architecture of a CNN differs significantly from that of a traditional neural network in several key ways.

One of the main differences between the architecture of a CNN and a traditional neural network is the presence of convolutional layers in the CNN. These layers are responsible for applying a series of filters to the input data, which are then used to extract relevant features from the data. This process is known as convolution, and it allows the CNN to identify patterns and features in the data that may be difficult for a traditional neural network to detect.

Another key difference between the architecture of a CNN and a traditional neural network is the use of pooling layers in the CNN. These layers apply a downsampling operation that reduces the spatial size (height and width) of the feature maps while preserving the strongest responses. Pooling layers have no learnable parameters of their own, but by shrinking the feature maps they reduce the amount of computation and the number of parameters needed in the layers that follow.

In addition to convolutional and pooling layers, CNNs also typically include fully-connected layers, which are similar to those found in traditional neural networks. These layers are used to process the data and make predictions based on the features extracted by the convolutional and pooling layers. However, in a CNN, the fully-connected layers can be much smaller than in a traditional neural network applied to the same images, as they only need to process the extracted features rather than every raw pixel.

One of the main benefits of the architecture of a CNN is its ability to learn and adapt to the structure of the data. Traditional neural networks are often limited in their ability to process data with a specific structure, such as images, as they lack the specialized layers and techniques used in CNNs to extract relevant features. This makes CNNs particularly useful for tasks such as image classification, where they can learn to recognize patterns and features in the data that may be difficult for a traditional neural network to detect.

Another key benefit of the architecture of a CNN is its parameter efficiency. A traditional fully connected network applied to images needs a separate weight for every pixel in every neuron of its first layer, so the number of parameters grows very quickly with image size and the model becomes prone to overfitting. A convolutional layer, by contrast, reuses the same small filter at every position (weight sharing), and pooling layers shrink the feature maps, so a CNN can handle large images and large datasets with far fewer parameters.

Overall, the architecture of a CNN differs significantly from that of a traditional neural network in several key ways. While traditional neural networks are designed to process and analyze data in a more general sense, CNNs are specifically designed to handle and extract features from data with a grid-like structure, such as images. This makes them particularly useful for tasks such as image classification, where they can learn to recognize patterns and features in the data that may be difficult for a traditional neural network to detect.

How Do Convolutional Layers and Pooling Layers Work in a CNN?

Convolutional layers and pooling layers are two important components of a convolutional neural network (CNN), which is a type of artificial neural network that is specifically designed for image recognition tasks.

These layers work together to extract features from the input image and reduce the dimensionality of the data, making it easier for the network to learn patterns and classify images.

Convolutional layers are the primary building blocks of a CNN, and they perform the task of feature extraction. These layers consist of a set of filters, also known as kernels, which are applied to the input image to detect patterns and features. Each filter is a small matrix of weights that is trained to recognize a specific feature, such as edges, corners, or textures.

During the forward pass of the CNN, the convolutional layer applies each filter to the input image by performing a convolution operation. This involves sliding the filter across the image and, at each position, multiplying the weights of the filter by the pixel values they overlap and summing the results. The resulting output is a feature map, which indicates where in the image the feature that the filter was trained to detect is present.

The size of the feature map is determined by the size of the filter, the stride (the number of pixels the filter moves between positions), and any padding added around the input. Without padding, larger filters and larger strides result in smaller feature maps, while smaller filters and smaller strides result in larger feature maps. For an input of width W, a filter of width K, padding P, and stride S, the output width is floor((W - K + 2P) / S) + 1. The number of filters in a convolutional layer determines the number of feature maps that are generated.
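The relationship between input size, filter size, stride, and padding can be checked with a short helper. This sketch assumes the standard output-size formula, floor((input + 2 * padding - kernel) / stride) + 1, applied along one dimension; the function name and the 32-pixel example are chosen for illustration.

```python
def conv_output_size(input_size, kernel_size, stride=1, padding=0):
    """Width (or height) of the feature map along one dimension."""
    return (input_size + 2 * padding - kernel_size) // stride + 1


no_padding = conv_output_size(32, 3)               # 3x3 filter, stride 1
larger_kernel = conv_output_size(32, 5)            # 5x5 filter, stride 1
strided = conv_output_size(32, 3, stride=2)        # 3x3 filter, stride 2
same_padding = conv_output_size(32, 3, padding=1)  # padding keeps the size

print(no_padding, larger_kernel, strided, same_padding)  # 30 28 15 32
```

A larger filter or a larger stride shrinks the feature map, while padding of one pixel with a 3x3 filter keeps the output the same size as the input.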

Convolutional layers can also be stacked on top of each other, allowing the network to learn more complex features by combining the outputs of multiple filters from the previous layer. Stacking helps the network to learn features that are not easily detectable by a single filter.

Pooling layers are used in a CNN to reduce the size of the feature maps and reduce the computational complexity of the network. These layers perform a downsampling operation on the feature maps, which helps to reduce the spatial resolution of the data.

There are two common types of pooling layers: max pooling and average pooling. Max pooling selects the maximum value from a group of adjacent values in the feature map, while average pooling takes the average of all the values in the group. With the common choice of a 2x2 window and a stride of 2, either type halves the height and width of the feature map. Pooling itself has no learnable parameters, but the smaller feature maps reduce computation and the number of parameters in later fully connected layers, which can help to limit overfitting.

Pooling layers are typically placed after a convolutional layer, and they are used to extract the most important features from the feature map. By selecting the maximum or average value, pooling layers help to highlight the most prominent features and suppress the less important ones. This helps the network to focus on the most important features and improve its performance.
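The two pooling variants can be sketched as follows. The example assumes a 2x2 window with a stride of 2, which is a common choice but not the only one, and a made-up 4x4 feature map.

```python
def pool2d(feature_map, size=2, stride=2, mode="max"):
    """Downsample a 2-D feature map with max or average pooling."""
    pooled = []
    for i in range(0, len(feature_map) - size + 1, stride):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, stride):
            window = [
                feature_map[i + di][j + dj]
                for di in range(size)
                for dj in range(size)
            ]
            if mode == "max":
                row.append(max(window))
            else:
                row.append(sum(window) / len(window))
        pooled.append(row)
    return pooled


feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 0, 5, 6],
    [1, 2, 7, 8],
]

max_pooled = pool2d(feature_map, mode="max")
avg_pooled = pool2d(feature_map, mode="avg")

print(max_pooled)  # [[4, 2], [2, 8]]
print(avg_pooled)  # [[2.5, 1.0], [0.75, 6.5]]
```

Both outputs are 2x2, half the height and width of the 4x4 input. Max pooling keeps only the strongest response in each window, while average pooling smooths over it.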

In summary, convolutional layers and pooling layers work together in a CNN to extract features from the input image and reduce the dimensionality of the data. Convolutional layers apply filters to the input image to detect patterns and features, while pooling layers reduce the size of the feature maps and extract the most important features. These layers help the network to learn patterns and classify images, making it a powerful tool for image recognition tasks.

How Are Weights and Biases Learned in a CNN?

Weights and biases are learned in a convolutional neural network (CNN) through a process called training.

During training, the CNN is presented with a set of input data and corresponding output labels, and it adjusts the weights and biases in order to correctly predict the outputs for future data.

The first step in training a CNN is to initialize the weights to small random values (biases are often initialized to zero). Random initialization breaks the symmetry between neurons: if all weights started out identical, every filter in a layer would receive the same updates and learn the same feature. The weights and biases are then adjusted through the use of an optimization algorithm, such as stochastic gradient descent (SGD).

SGD works by measuring the error between the predicted output of the CNN and the actual output label, and then computing the gradient of that error with respect to every weight and bias using backpropagation. Each parameter is then moved a small step in the direction that reduces the error. The size of the step is controlled by the learning rate.

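As the CNN processes more and more data during training, the weights and biases are continually adjusted to minimize the error. In practice, updates are usually computed on small batches of training examples (mini-batches), and the full training set is passed through the network many times, with each pass called an epoch.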
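The update rule can be illustrated on a model far simpler than a CNN. The sketch below assumes a single weight and bias, a squared-error loss, and one training example per update; the data, learning rate, and number of epochs are arbitrary choices for the example. A real CNN applies the same idea to millions of parameters, with gradients computed by backpropagation.

```python
import random


def sgd_fit(data, learning_rate=0.05, epochs=200, seed=0):
    """Fit y = w * x + b with stochastic gradient descent."""
    rng = random.Random(seed)
    w = rng.uniform(-0.5, 0.5)  # random initial weight
    b = 0.0                     # bias starts at zero
    samples = list(data)
    for _ in range(epochs):
        rng.shuffle(samples)    # visit the examples in a new order each epoch
        for x, y in samples:
            error = (w * x + b) - y
            # Gradients of the loss 0.5 * error**2 with respect to w and b.
            grad_w = error * x
            grad_b = error
            # Step against the gradient, scaled by the learning rate.
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


# Noise-free toy data generated from y = 2x + 1.
data = [(x, 2 * x + 1) for x in [-2, -1, 0, 1, 2]]
w, b = sgd_fit(data)
print(round(w, 3), round(b, 3))  # close to 2 and 1
```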

The error itself is quantified by a loss function, which measures the difference between the predicted output and the actual output label (cross-entropy is a common choice for classification). The loss function guides the optimization process, since its gradient determines the direction in which the weights and biases are updated.
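As an illustration, the sketch below computes cross-entropy, a loss function commonly used for classification, for a single example. It assumes the network outputs a probability for each class; the probability values are invented for the example.

```python
import math


def cross_entropy(predicted_probs, true_class):
    """Negative log of the probability assigned to the correct class."""
    return -math.log(predicted_probs[true_class])


# The true class is 0 in both cases.
confident_correct = cross_entropy([0.90, 0.05, 0.05], true_class=0)
confident_wrong = cross_entropy([0.05, 0.90, 0.05], true_class=0)

print(round(confident_correct, 3))  # 0.105
print(round(confident_wrong, 3))    # 2.996
```

The loss is small when the network assigns high probability to the correct class and grows sharply when it is confidently wrong, which is what pushes the weights in the right direction during training.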

There are several factors that can affect the learning process in a CNN. One important factor is the size of the training data set. A larger training data set allows the CNN to learn more about the patterns and relationships in the data, which can improve its accuracy. However, a larger training data set also requires more computational resources, which can slow down the training process.

Another factor that can impact the learning process is the complexity of the CNN itself. A CNN with more layers and more parameters will be able to learn more complex patterns in the data, but it will also require more computational resources and may be more prone to overfitting, which is when the CNN becomes too specialized for the training data and performs poorly on new data.

The optimization algorithm used to adjust the weights and biases can also have an impact on the learning process. Different algorithms have different strengths and weaknesses, and choosing the right algorithm can significantly improve the accuracy of the CNN.

Finally, the hyperparameters of the CNN, such as the learning rate and the batch size, can also affect the learning process. Choosing the right hyperparameters can help the CNN learn more effectively and avoid common pitfalls such as overfitting or slow convergence.

In conclusion, weights and biases in a CNN are learned through a process of training and optimization. The CNN is presented with input data and corresponding output labels, and the weights and biases are adjusted in order to minimize the error between the predicted and actual outputs. The size of the training data set, the complexity of the CNN, the optimization algorithm used, and the hyperparameters all play a role in the learning process.

How Do You Decide on the Number of Filters and Kernel Size for a Convolutional Layer in a CNN?

Deciding on the number of filters and kernel size for a convolutional layer in a CNN involves trade-offs between the complexity of the model, the amount of computation required, and the ability of the model to capture relevant features from the input data.

In general, increasing the number of filters and kernel size can lead to a more powerful model that is able to capture more intricate features, but it also increases the number of trainable parameters and the computational cost of the model.

On the other hand, decreasing the number of filters and kernel size can lead to a simpler model with fewer trainable parameters and lower computational cost, but it may also result in a model that is less able to capture relevant features from the input data.
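The effect of these choices on model size can be estimated before training anything. The helper below is a minimal sketch that assumes a standard 2-D convolutional layer with square kernels and one bias per filter; the layer sizes are examples only.

```python
def conv_layer_params(in_channels, num_filters, kernel_size, bias=True):
    """Number of trainable parameters in a standard 2-D convolutional layer."""
    weights = kernel_size * kernel_size * in_channels * num_filters
    biases = num_filters if bias else 0
    return weights + biases


# An RGB input (3 channels) with different filter counts and kernel sizes.
base = conv_layer_params(in_channels=3, num_filters=32, kernel_size=3)
more_filters = conv_layer_params(in_channels=3, num_filters=64, kernel_size=3)
bigger_kernel = conv_layer_params(in_channels=3, num_filters=32, kernel_size=5)

print(base, more_filters, bigger_kernel)  # 896 1792 2432
```

Doubling the number of filters doubles the parameter count, while moving from a 3x3 to a 5x5 kernel nearly triples it, because the weight count grows with the square of the kernel size.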

One approach to deciding on the number of filters and kernel size is to start with relatively small values and gradually increase them, testing the model's performance on a validation set at each step. This allows you to identify the point at which the model's performance starts to plateau or degrade, indicating that further increases in the number of filters or kernel size are not likely to be beneficial.

Another approach is to use domain knowledge or established architectures to guide the selection of the number of filters and kernel size. For example, if you are working with image data, some architectures use a larger kernel size in the first layer to cover a wider area of the raw image, and small kernels (such as 3x3) in later layers. Small kernels remain effective deeper in the network because stacking layers enlarges the region of the input that each output value depends on (its receptive field). Similarly, you may want to increase the number of filters in the deeper layers of the network, since this can help the model capture more complex features that are composed of combinations of simpler features extracted by the earlier layers.
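One way to reason about kernel size across layers is the receptive field, meaning the region of the input that a single output value depends on. The sketch below assumes stride-1 convolutions with no pooling in between, and compares stacked 3x3 layers with a single larger kernel.

```python
def receptive_field(kernel_sizes):
    """Receptive field (one dimension) of stacked stride-1 convolutions."""
    field = 1
    for k in kernel_sizes:
        field += k - 1
    return field


def weights_per_channel_pair(kernel_sizes):
    """Weights needed per input/output channel pair, ignoring biases."""
    return sum(k * k for k in kernel_sizes)


two_small = receptive_field([3, 3])   # two stacked 3x3 layers
one_large = receptive_field([5])      # a single 5x5 layer

print(two_small, one_large)                # 5 5
print(weights_per_channel_pair([3, 3]))    # 18
print(weights_per_channel_pair([5]))       # 25
```

Two stacked 3x3 layers cover the same 5x5 region as one 5x5 layer but use fewer weights per channel pair and add an extra nonlinearity between them, which is one reason small kernels are a popular default.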

It is also important to consider the size of the input data and the amount of computation that you are willing to invest in the model. For large input data, you may want to use a larger kernel size and a larger number of filters in order to capture as much information as possible. However, this will also increase the computational cost of the model, so you will need to balance this against the resources that you have available.

In general, it is a good idea to experiment with different combinations of kernel size and number of filters to see which works best for your particular dataset and task. This may involve some trial and error, but the reward is a more powerful and effective model that is able to capture the relevant features of the input data.

How Do You Prevent Overfitting in a CNN?

Overfitting in a Convolutional Neural Network (CNN) occurs when the model becomes too complex and begins to learn patterns that are specific to the training data, rather than general patterns that can be applied to unseen data.

This can lead to poor performance on the validation or test set, as the model may not be able to accurately classify new examples.

There are several ways to prevent overfitting in a CNN:

- Use regularization techniques: Regularization techniques such as L1 and L2 regularization can help prevent overfitting by adding a penalty term to the loss function that reduces the complexity of the model.

- Use dropout layers: Dropout layers randomly drop a percentage of neurons during training, which helps to prevent the model from becoming too reliant on any one neuron. This can improve generalization and reduce overfitting.

- Use early stopping: Early stopping is a technique that involves monitoring the performance of the model on the validation set during training, and stopping the training process when the performance begins to degrade. This can help prevent the model from overfitting to the training data.

- Use data augmentation: Data augmentation involves creating new training examples by applying transformations such as rotation, scaling, and cropping to existing examples. This can help to increase the variety of training examples and reduce overfitting.

- Use a smaller model: A smaller model with fewer parameters is less likely to overfit, as there are fewer parameters to learn. However, it is important to strike a balance between model size and performance, as a model that is too small may not have sufficient capacity to accurately classify examples.

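- Use weight decay: Weight decay shrinks the weights slightly toward zero at every update step. With plain SGD it is equivalent to the L2 regularization described above, so it is usually applied as an alternative way of configuring that penalty rather than as a separate technique. It discourages large weights, which can help to prevent the model from learning overly complex patterns and reduce overfitting.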

- Use cross-validation: Cross-validation involves dividing the training data into a number of folds, then repeatedly training the model on all but one fold and evaluating it on the held-out fold, so that each fold serves as the validation set once. This does not prevent overfitting by itself, but it gives a more reliable estimate of how well the model generalizes, which helps to identify overfitting and allows adjustments to be made to the model.
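Of the techniques listed above, early stopping is the simplest to express in code. The sketch below assumes the validation loss has already been recorded after each epoch (the values are invented) and uses a "patience" setting, a common convention meaning the number of epochs without improvement that are tolerated before stopping.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch  # stop training here
    return len(val_losses) - 1, best_epoch  # never triggered


# Validation loss improves, then starts to rise as the model overfits.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=2)
print(stop_epoch, best_epoch)  # 4 2
```

Training stops at epoch 4, and the weights saved at epoch 2, where the validation loss was lowest, would be the ones to keep.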

Overall, it is important to carefully monitor the performance of the model on the validation set during training and make adjustments as necessary to prevent overfitting. A combination of the above techniques can be effective in reducing overfitting and improving the generalization of the model.
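Monitoring validation performance depends on how the data is split. As a sketch of the usual k-fold scheme, the helper below assumes each fold is held out once for validation while the model trains on the remaining folds. It works on sample indices only and does not shuffle, which a real pipeline normally would.

```python
def k_fold_splits(num_samples, k):
    """Return a list of (training_indices, validation_indices) pairs."""
    indices = list(range(num_samples))
    fold_size, remainder = divmod(num_samples, k)
    splits = []
    start = 0
    for fold in range(k):
        # Spread any leftover samples over the first few folds.
        end = start + fold_size + (1 if fold < remainder else 0)
        validation = indices[start:end]
        training = indices[:start] + indices[end:]
        splits.append((training, validation))
        start = end
    return splits


splits = k_fold_splits(num_samples=10, k=5)
for training, validation in splits:
    print(validation, training)
```

Each sample appears in exactly one validation fold, so averaging the validation scores over the five runs gives an estimate that does not depend on a single lucky or unlucky split.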

How Do You Handle Imbalanced Datasets in a CNN?

Imbalanced datasets can be a problem in a CNN (convolutional neural network) because they can cause the model to become biased towards the majority class.

This can lead to poor performance on the minority class, which is often the class of interest in many applications such as fraud detection or medical diagnosis.

Here are several strategies for handling imbalanced datasets in a CNN:

- Oversampling the minority class: One approach to dealing with imbalanced datasets is to oversample the minority class by generating additional synthetic samples using techniques such as SMOTE (Synthetic Minority Oversampling Technique). This can help to balance the dataset by increasing the number of samples in the minority class. However, this approach may also lead to overfitting if the synthetic samples are not representative of the real data.

- Undersampling the majority class: Another approach is to undersample the majority class by randomly selecting a subset of the majority class samples. This can help to balance the dataset, but it may also lead to the loss of valuable information in the majority class.

- Weighted loss function: Another approach is to use a weighted loss function, where the loss for the minority class is given a higher weight compared to the loss for the majority class. This can help to give more emphasis to the minority class, which can improve the performance on the minority class.

- Stratified sampling: Another approach is to use stratified sampling, which ensures that the proportions of each class are maintained in the train and validation sets. This does not rebalance the data, but it guarantees that the minority class is represented in every split, so that validation metrics reflect performance on it.

- Data augmentation: Data augmentation can be used to increase the number of samples in the minority class by applying transformations such as rotation, scaling, and cropping to the existing samples. This can help to improve the model's generalization ability and reduce overfitting.

- Class balancing techniques: The re-sampling and weighting approaches above are often grouped together as class balancing techniques. Re-sampling methods change which samples the model sees (oversampling, undersampling, or both) to create a more balanced dataset, while class weighting methods leave the data unchanged and assign each class a weight based on its frequency, typically in inverse proportion.

- Preprocessing techniques: Preprocessing techniques such as normalization and standardization do not address class imbalance directly, but they are usually applied alongside the strategies above. Normalization involves scaling the data to a common range, while standardization involves transforming the data to have a mean of zero and a standard deviation of one. These techniques can help to improve the model's performance by reducing the impact of large scale differences in the data.

- Ensemble methods: Ensemble methods such as bagging and boosting can also be used to handle imbalanced datasets in a CNN. Bagging involves training multiple models on different subsets of the data, while boosting involves training multiple models sequentially, with each model focusing on the samples that were misclassified by the previous model. These methods can help to reduce the impact of imbalanced datasets by aggregating the predictions of multiple models.
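For the weighted loss and class weighting strategies above, the weights are often derived from class frequencies. The sketch below assumes a common inverse-frequency heuristic, total / (number of classes * class count); the label names and the 90/10 split are invented for the example.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Give each class a weight inversely proportional to its frequency."""
    counts = Counter(labels)
    total = len(labels)
    num_classes = len(counts)
    return {cls: total / (num_classes * n) for cls, n in counts.items()}


# Hypothetical dataset: 90 majority-class and 10 minority-class samples.
labels = ["normal"] * 90 + ["fraud"] * 10
weights = inverse_frequency_weights(labels)

print(weights["fraud"])             # 5.0
print(round(weights["normal"], 3))  # 0.556
```

With these weights, a mistake on a minority-class sample contributes nine times as much to the loss as a mistake on a majority-class sample, which offsets the 9-to-1 imbalance in the data.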

In conclusion, there are several strategies that can be used to handle imbalanced datasets in a CNN. These strategies include oversampling and undersampling the minority and majority classes, using a weighted loss function, stratified sampling, data augmentation, class balancing techniques, preprocessing techniques, and ensemble methods. It is important to carefully evaluate the pros and cons of each approach and choose the one that best fits the specific needs of the problem at hand.

Can a CNN Be Used for Natural Language Processing Tasks, Such as Sentiment Analysis or Text Classification?

Convolutional Neural Networks, or CNNs, are a type of artificial neural network that are particularly well-suited for image recognition tasks.

They are designed to process visual data by using a series of convolutional filters to extract features from the input image and then using these features to classify the image.

However, CNNs can also be used for natural language processing tasks such as sentiment analysis and text classification. While they may not be as effective as some other types of neural networks, such as Long Short-Term Memory (LSTM) networks, CNNs can still provide good results for these tasks.

One way that CNNs can be used for natural language processing tasks is by converting the text into a numerical representation that can be processed by the network. This can be done using techniques such as word embedding, which maps each word to a dense vector so that words with similar meanings have similar vectors. The sequence of word vectors is then used as input to a CNN, whose filters slide over a few neighboring words at a time.

Another approach is to have the CNN operate on characters rather than words. The text still has to be encoded numerically (for example, as one vector per character), but no pre-trained word embeddings are needed, and the CNN learns filters that extract features directly from character sequences. In both cases, the extracted features can then be used to classify the text or to perform sentiment analysis.
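A toy version of the embedding-based approach is sketched below. Everything in it is hypothetical: the two-dimensional embeddings, the vocabulary, and the filter weights are hand-picked so that the arithmetic is easy to follow, whereas a real model would learn all of them. The filter spans two consecutive words, and the largest response across the sentence is kept (often called max-over-time pooling).

```python
def conv1d_over_tokens(embeddings, filt):
    """Slide a filter spanning len(filt) consecutive tokens over a sentence."""
    width = len(filt)
    responses = []
    for start in range(len(embeddings) - width + 1):
        total = 0.0
        for offset in range(width):
            token_vector = embeddings[start + offset]
            total += sum(w * x for w, x in zip(filt[offset], token_vector))
        responses.append(total)
    return responses


# Hypothetical 2-D embeddings: [positivity, negation].
embedding_table = {
    "the": [0.0, 0.0],
    "movie": [0.1, 0.0],
    "was": [0.0, 0.0],
    "not": [0.0, 1.0],
    "good": [1.0, 0.0],
}

# Hand-picked bigram filter: a negation word followed by a positive word.
negated_positive_filter = [[0.0, 1.0], [1.0, 0.0]]

sentence = "the movie was not good".split()
embeddings = [embedding_table[token] for token in sentence]

responses = conv1d_over_tokens(embeddings, negated_positive_filter)
feature = max(responses)  # max-over-time pooling
print(responses.index(feature), feature)  # 3 2.0
```

The filter responds most strongly at position 3, the bigram "not good", and the pooled value would be passed to a classifier as one feature of the sentence.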

One advantage of using CNNs for natural language processing tasks is that they are able to process large amounts of data very quickly. This makes them well-suited for tasks such as text classification, where large datasets are often used. Because the filter responses at different positions can be computed in parallel, CNNs are often faster to train than recurrent networks. Additionally, CNNs are able to learn from data without the need for extensive feature engineering, which can be a time-consuming process for traditional machine learning methods.

However, there are also some drawbacks to using CNNs for natural language processing tasks. One major limitation is that CNNs are not as good at processing sequential data as other types of neural networks, such as LSTM networks. This can make them less effective at tasks such as language translation or language generation, where the order of the words in a sentence is important.

Another limitation of CNNs is that they are not as good at capturing long-range dependencies in the data. Each filter only sees a few neighboring words at a time, so if a word at the start of a long sentence changes the meaning of a word near the end, a CNN may not be able to capture this relationship. This can make them less effective at tasks such as sentiment analysis when the meaning of a word is affected by words that appear much earlier in the text.

Despite these limitations, CNNs can still be used effectively for natural language processing tasks such as sentiment analysis and text classification. In many cases, they provide good results on their own, and they can also be used in combination with other types of neural networks, such as LSTM networks.

Overall, while CNNs may not be the best choice for all natural language processing tasks, they can still be a useful tool in certain situations. They are particularly well-suited for tasks that require the processing of large amounts of data quickly, whether used on their own or in combination with other types of neural networks.

Can a Convolutional Neural Network Be Used to Improve SEO?

A CNN (Convolutional Neural Network) is a type of deep learning model that is widely used for image and video recognition. It is loosely inspired by the way the visual system processes information, and it is able to analyze and classify the content of images and videos with a high level of accuracy.

CNNs have been used in a variety of applications, including facial recognition, object detection, and image classification.

They have also been used to improve search engine optimization (SEO) by helping search engines understand the content of images and videos on websites.

There are a few different ways that a CNN can be used to improve SEO:

- Image tagging: One way that a CNN can be used to improve SEO is by helping to accurately tag images on a website. When images are properly tagged, search engines can understand what the image is about and can use that information to improve the ranking of the website in search results. For example, if a website has an image of a dog and the CNN accurately tags it as a "dog," the search engine will understand that the image is related to dogs and may rank the website higher for searches related to dogs.

- Image optimization: In addition to tagging images, a CNN can support optimizing the images on a website for SEO. For instance, its predicted labels can be used to draft alt text that gives search engines additional context. This is typically paired with conventional steps such as reducing the file size of the image to improve page load times.

- Video recognition: CNNs can also be used to improve SEO by helping search engines understand the content of videos on a website. This can involve accurately tagging the video with keywords that are relevant to its content. It is often paired with transcribing the audio and adding closed captions, which is a speech recognition task rather than an image recognition one, to make the video more accessible to users.

- Image search: CNNs can also be used to improve SEO by helping search engines understand the content of images and video frames in image search results, for example by accurately identifying the objects in an image.
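As a rough illustration of the image tagging idea in the list above, the sketch below turns label scores into alt text for an `<img>` element. The scores are hard-coded stand-ins for what an image classifier might return; the function names, threshold, and file path are hypothetical and chosen only for this example.

```python
from html import escape


def tags_from_scores(scores, threshold=0.5, max_tags=3):
    """Keep the highest-scoring labels at or above the threshold."""
    kept = [(label, p) for label, p in scores.items() if p >= threshold]
    kept.sort(key=lambda item: item[1], reverse=True)
    return [label for label, _ in kept[:max_tags]]


def img_tag(src, scores, threshold=0.5, max_tags=3):
    """Build an <img> element whose alt text lists the confident labels."""
    alt = ", ".join(tags_from_scores(scores, threshold, max_tags))
    return f'<img src="{escape(src, quote=True)}" alt="{escape(alt, quote=True)}">'


# Hypothetical classifier output for one image (label -> probability).
scores = {"dog": 0.94, "grass": 0.61, "cat": 0.03}
print(img_tag("/images/park.jpg", scores))
# <img src="/images/park.jpg" alt="dog, grass">
```

The threshold keeps low-confidence labels such as "cat" out of the alt text, which matters because an inaccurate tag can mislead both users and search engines. In practice, generated alt text would usually be reviewed by a person before publishing.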

Overall, there are many ways that a CNN can be used to improve SEO. By helping search engines understand the content of images and videos on a website, a CNN can help improve the ranking of the website in search results and increase the visibility of the website to potential users.

Additionally, by optimizing images and videos for SEO and improving the accessibility of these types of content, a CNN can help to improve the user experience on a website and increase the likelihood that users will stay on the website longer, which can also help to improve the website's ranking in search results.

How Search Engine Models Use Convolutional Neural Networks

Convolutional neural networks (CNNs) are a type of artificial neural network specifically designed for image recognition and processing. They are widely used in a variety of applications, including search engines, so they are naturally a part of Market Brew's search engine modeling platform.

In search engine models, CNNs can be used to improve image search functionality by allowing the search engine model to understand and interpret the content of images.

For example, if a query is for "cat," the search engine model can use a CNN to scan through images in the model to identify those that contain cats.

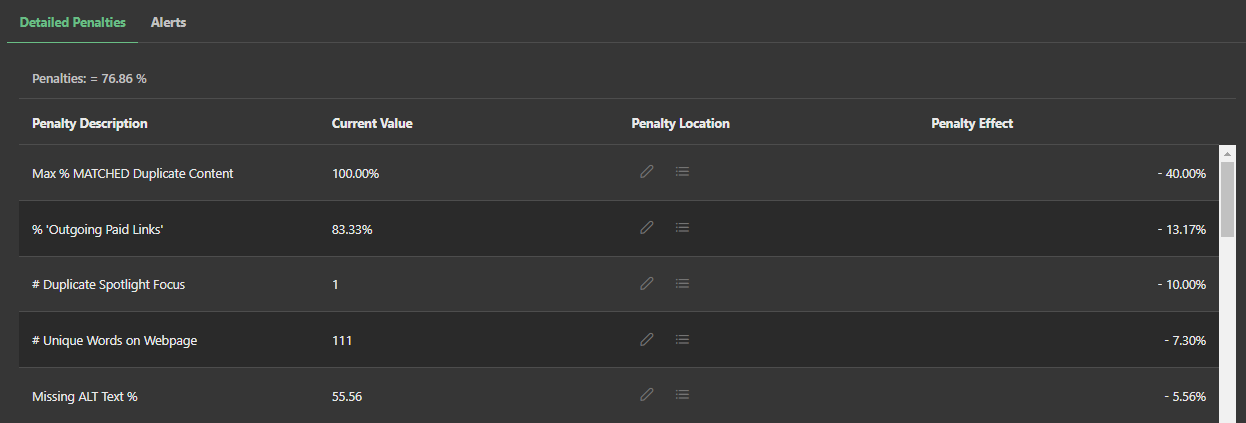
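The "cat" query example can be sketched as a simple lookup over labels that a CNN has already assigned to each image. This is a minimal illustration under stated assumptions, not a description of how Market Brew or any search engine implements image search; the file names, scores, and function names are hypothetical.

```python
from collections import defaultdict


def build_label_index(image_labels):
    """Map each label to its images, sorted by descending score."""
    index = defaultdict(list)
    for image, labels in image_labels.items():
        for label, score in labels.items():
            index[label].append((score, image))
    for label in index:
        index[label].sort(reverse=True)
    return index


def search(index, query, min_score=0.5):
    """Return images whose score for the query label meets min_score."""
    hits = index.get(query.lower(), [])
    return [image for score, image in hits if score >= min_score]


# Hypothetical CNN predictions for three images (label -> probability).
image_labels = {
    "a.jpg": {"cat": 0.97, "sofa": 0.55},
    "b.jpg": {"dog": 0.91},
    "c.jpg": {"cat": 0.62, "dog": 0.30},
}
index = build_label_index(image_labels)
print(search(index, "cat"))  # ['a.jpg', 'c.jpg']
```

Running the classifier once per image and indexing its labels keeps query time cheap, since answering a query becomes a dictionary lookup rather than a fresh pass of the model over every image.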

CNNs can also help improve the overall performance of a site. Many performance algorithms, such as the Core Web Vitals algorithms, measure how effectively images are delivered, including their load times in various formats.

By using CNNs to analyze the images on a page, a search engine model can monitor image characteristics that may be useful when triaging a problem flagged by performance algorithms like the Core Web Vitals.

In addition to image search and performance optimization, CNNs can also be used in search engine models to improve the accuracy of text recognition.

For example, if a query contains a phrase or word that appears inside an image, a CNN can be used to analyze the image and extract the relevant text, so that it can be matched much like the text in the image's ALT attribute. This can be particularly useful in cases where the text within an image is difficult to read or is written in a foreign language.

Overall, the use of CNNs in search engine models can greatly improve the accuracy and effectiveness of image search optimization.

By allowing search engine models to better understand and interpret the content of images, CNNs can help Market Brew provide a more powerful SEO testing platform for users, ultimately enhancing their optimization experience.

